The logic module is slowly becoming useful. This week I managed to get
some basic inference in propositional logic working. This should be
enough for the assumption system (although having first-order inference
would be cool). You can pull from my branch:

`git pull http://fa.bianp.net/git/sympy.git` …
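
To give an idea of what "basic inference in propositional logic" can mean, here is a minimal sketch of entailment checking by truth-table enumeration. It is not the code from the branch above and does not use SymPy; the names `entails`, `premises` and `symbols` are hypothetical, and formulas are modelled as plain Python functions over a dict of truth values.

```python
from itertools import product


def entails(premises, conclusion, symbols):
    """Return True if every assignment satisfying all premises also
    satisfies the conclusion (brute-force truth-table check)."""
    for values in product([False, True], repeat=len(symbols)):
        model = dict(zip(symbols, values))
        # A counterexample is a model of the premises that falsifies the conclusion.
        if all(p(model) for p in premises) and not conclusion(model):
            return False
    return True


# Modus ponens: from A and (A implies B), infer B.
premises = [
    lambda m: m["A"],                  # A
    lambda m: (not m["A"]) or m["B"],  # A implies B
]
conclusion = lambda m: m["B"]          # B

print(entails(premises, conclusion, ["A", "B"]))      # both premises
print(entails(premises[1:], conclusion, ["A", "B"]))  # only A implies B
```

Enumeration is exponential in the number of symbols, so this only illustrates the idea; a real module would likely use something smarter.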

The first task for my Summer of Code project is to write a nice boolean
algebra module. I wanted a clean module that follows SymPy's object
model and that plays well with other objects. In practice this means
that it should inherit from Basic and that it should behave well …

As a prerequisite of my GSOC project I have to make some modifications to
SymPy's current logic module (see previous post), so I decided to go out
and take a look at what others are doing in this area. aima-python is
a project that tries to implement all algorithms found …

First post on my GSOC adventure. This year I got accepted into Google's
Summer of Code program as a student, and my job will be to implement the
assumptions framework in SymPy. Although the project is officially
only about implementing an assumptions framework, I have to prepare the
ground before the …

I spent a lot of time trying to figure out why Django removed the '+'
character from the POST data retrieved via request.POST. I still don't
know the reason for this behaviour, but using request.raw_post_data
saved my day ...
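
One possible explanation, which is an assumption and not something verified against Django itself: in an `application/x-www-form-urlencoded` body a literal '+' stands for a space, so any form parser will turn an unescaped '+' into ' '. The sketch below shows this with the standard library only (no Django); the field name `expr` is made up for the example.

```python
from urllib.parse import parse_qs, quote_plus

# A raw form body where the client did not escape the '+' sign.
raw_body = "expr=1+1"
decoded = parse_qs(raw_body)["expr"][0]
print(repr(decoded))  # the '+' has been decoded as a space

# If the client percent-encodes the value first, the '+' survives parsing.
escaped_body = "expr=" + quote_plus("1+1")
round_trip = parse_qs(escaped_body)["expr"][0]
print(escaped_body, repr(round_trip))
```

If that is what was happening, reading the raw body works because it skips the form decoding step, and escaping '+' as `%2B` on the client side would be the other way out.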

After Mengíbar came Martos. This time the venue was the "Blooms
Day", a place for which "an Irish pub" is an acceptable
description. Oscar and I got there first, on one of those rare occasions
when we arrive early (there is no explanation, it just
happened), so we had …

My computer doesn't really do anything ... I just blame it for everything

In the city, people are mass-produced. In the countryside they are still
made by hand: they are molded, dried in the sun and then painted, which
is why they are so special. First concert of the new tour, in Mengíbar, and
one of the most intense weekends that …

My work "partner", the great Goyal, has just won first
prize in the yodona contest. In the end I'm going to have to treat you with
respect and everything ... when was it that we were going to celebrate?

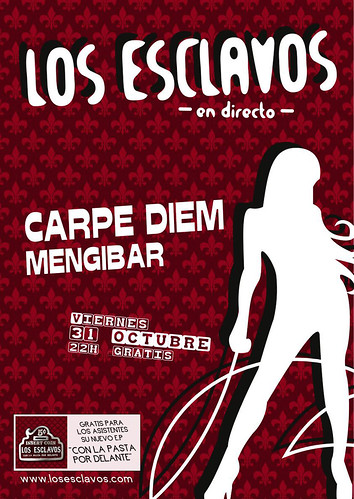

New repertoire, new arrangements. New tour, new places. Without
forgetting old friends.

This time we start the tour in Mengíbar, and tomorrow, Thursday
(October 30), we will be around la pecha, celebrating the release of the
EP and Hugo's birthday! Congratulations!

Honestly, I feel lucky to have the Open Source World Conference
so close by. This year it was great, especially the exhibitions in the
hall. The worst part was that I couldn't attend some talks for lack of
space ... well, that and missing the bus back. I'm still in Málaga …

I finally found a way to stop straining my eyes trying to
tell dark blue from black. Just add this code to your
.bash_profile and your directories will be colored in a much more
readable green:

alias ls='ls --color'
LS_COLORS='di=1:fi=0:ln=31:pi=5 …

Things happen. I'm going to miss the concert I was most looking forward
to this season ... Meanwhile, I'll be in California
at a wedding (California the state, not the club on the N-3). PS: some
songs from the new covers EP are already on MySpace.

- Write a book
- Win a Grammy
- Drink from a chocolate fountain (done)

In the end, this Saturday's concert is not at the Vogue but at the
Sugarpop: Migue has put together some
great posters (if you look closely, on the foosball table some players
are from Granada and others from Jerez xD), and although I'm in the
middle of exams, we're getting in some rehearsals so that …

(there's also a video) Now, back to my
studies in psychoceramics and headfirst for the Ig Nobel.

This Friday we (Los Esclavos) had the privilege of opening for Paul
Collins, who came over to Armilla to play a concert at La
Telonera. For me, this was one of the concerts where I played
best (so much so that Migue had to buy me a few drinks afterwards,
although I lost …

After the concert in Sevilla, an Esclavos weekend recording in
Daimiel, in La Mancha. It was my first time recording with Los Esclavos,
and honestly it could not have gone better: it sounded good and we got it
done in one go, without major complications. In the end there will be
4 songs that will be posted …

Last weekend I went with these lunatics to the capital to test ourselves
in one of its venues: the Costello. The Madrid concert was
frankly incredible, we played damn well, the crowd responded, and for me
it was one of the times I've had the most fun playing, although honestly …

We finally played at Planta Baja!! It was last Saturday, January 19, and
it went pretty well. Pretty well considering it was the
first concert without Aitor, our vocalist and rhythm guitarist. The guy
hasn't hung out with us since we left him for a week …

My father has put together a video montage for the song "Madrid" that we
played at La Telonera, mixing it with footage from a rehearsal.

What a pity the Romans have only one neck! Caligula,
Roman emperor, famous for his authoritarian and extravagant conduct

Chapter I: I am me

Another day cooking spaghetti and, damn, it tastes worse every day. At
first I put some effort in and used to add a bit of meat, some cheese …

Really cool, the concert on November 24 at "La Telonera". Without a
doubt it was the concert where we have played best to date. This time
it was our turn to open the show, so we went on stage at
about 10:50, with the stage still half empty. We started …

Well, there have been some important changes lately. The first is
that our bassist, Enri, has broken his hand. Apparently he was in the
middle of an amateur orgy when he slipped off the bed and his metacarpal
hit the floor, both pulverizing in a long nuclear winter. The second
is that …

We finally have the tracks!!! For now we were given the tracks
unmastered, so I improvised a mastering for them
myself. When the guys at producciones peligrosas send them over
with their own mastering, they can be expected to sound a bit better still.